This morning we pushed the JAC EOM by about 0.6mm in the -Y direction (using ~25 thou thick washers), following last Friday's finding (alog 89158).

After that, the beam was good on the input side plate (offset by 0.1mm in the +Y direction) and OK on the output side plate (offset by 0.5mm in the -Y direction).

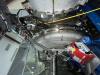

The beam position on the crystal itself should be ~0.13mm in +Y direction on the input face and ~0.36mm in +Y direction on the output face. The angle between the nominal path and the actual path outside of the crystal is about 0.6 degrees. See pictures and cartoon.

The calculation depends on the refractive index. I assumed n~1+deflection/wedge=1+2.35/2.85~1.85, but using 1.85+-0.5 instead won't change anything in a meaningful manner.
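As a quick check of the index estimate above, here is a minimal sketch of the thin-wedge relation, deflection ≈ (n - 1) × wedge. It assumes the deflection (2.35) and the wedge (2.85) are given in the same angular unit, so the unit cancels. The function name is made up for illustration.

```python
def wedge_index(deflection, wedge):
    """Thin-wedge (small-angle) estimate: deflection = (n - 1) * wedge.

    Both arguments must be in the same angular unit (assumed here).
    """
    return 1.0 + deflection / wedge


# Numbers quoted in the entry.
n_est = wedge_index(2.35, 2.85)
print(f"n from thin-wedge relation: {n_est:.3f}")

# Range considered in the entry (1.85 +- 0.5), for reference.
n_low, n_high = 1.85 - 0.5, 1.85 + 0.5
print(f"range considered: {n_low:.2f} to {n_high:.2f}")
```

The ratio works out to about 1.82, which the entry rounds to ~1.85. The difference is far smaller than the +-0.5 range that is said not to matter.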

This is acceptable: the beam is more than 1.5mm away from the side face of the crystal. I cannot remember the beam radius, but it should be smaller than 600um if the FDR is still valid, so the clearance is at least 2.5 beam radii.
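The clearance argument can be put in numbers with a short sketch. It assumes an ideal Gaussian beam with 1/e^2 intensity radius w and treats the crystal side face as a single straight edge at distance d from the beam centre, so the power fraction beyond the edge is 0.5·erfc(√2·d/w). The 600um radius is the upper bound quoted above, not a measured value.

```python
import math

d_mm = 1.5   # minimum distance from beam centre to side face (from the entry)
w_mm = 0.6   # upper bound on the beam radius (from the entry)

# Clearance in units of the beam radius.
clearance_in_radii = d_mm / w_mm

# Power fraction of an ideal Gaussian beam falling beyond a straight edge
# at distance d from the centre (single-edge assumption).
clipped_fraction = 0.5 * math.erfc(math.sqrt(2.0) * d_mm / w_mm)

print(f"clearance: {clearance_in_radii:.2f} beam radii")
print(f"power beyond the edge: {clipped_fraction:.1e}")
```

Under these assumptions the clipped power is below one part per million, and a smaller actual beam radius only improves it.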

IFO REFL beam check was done.

After Jennie restored the IMC alignment to the post-IMC axes check state, the IMC was locked, PRM was aligned, and the IFO REFL beam in HAM1 was quickly checked to see if the REFL air path somehow interferes with the new POP periscope stiffener. It didn't.

JM3 swap is ongoing.

Partly in the interest of time, I asked others to go ahead. Rahul and the team are working on it right now.

Yet to be done items:

- Ghost beams and beam dumps (important).

- Forward-going beam of S-pol (wrong polarization).

- There is a reflection of L3 coming back, reflected by JM3 and going high toward JM2.

- We haven't found the septum reflection yet.

- JAC RF locking.

- Modulation depth measurement with the new crystal.

  - It should be done at some point, but after the JM3 swap is fine; I'm not worried about huge differences relative to the old crystal.

- Beam profile.

  - Should be done in IOT-2L, but the beam quality is not as bad now thanks to the new crystal and the mode matching seems to be pretty good, so this is not a priority. Could be done after JM3.

I calculated the mode-matching before we replaced JM3 and got an upper limit of 0.26 % for the mode-mismatch, as the TM20 mode was hidden in the noise at 100mW input power. We turned up the whitening gain from 30 dB to 42 dB to have a better chance and still couldn't see it.

This plot shows the zoomed out ndscope of the TM00 modes and this one shows the max value for TM20.

After JM3 was installed and its position, pitch and yaw had been tuned by Rahul and Betsy to optimise the pointing through our HAM1 irises, Keita, Jenne and I tried to tweak up the alignment with JM3 sliders.

I have left the sliders near here and could not get them much better.

I measure the mode-mismatch to be 0.43 % with this alignment, which is worse than the earlier 0.26 % upper limit by a factor of at least about 1.7.
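For comparing the two numbers, here is a minimal sketch. It assumes the quoted percentages are the second-order peak height divided by the sum of the 00 and second-order peak heights. The entry does not spell out the exact definition, so `peak_fraction` and the example peak heights are illustrative only.

```python
def peak_fraction(p00, p20):
    """Fraction of power in the second-order peak (assumed definition)."""
    return p20 / (p00 + p20)


# Hypothetical peak heights in arbitrary units, chosen only to show usage.
example_fraction = peak_fraction(1.0, 0.0043)

# Numbers quoted in the entry.
limit_before = 0.26e-2    # upper limit before the JM3 swap
measured_after = 0.43e-2  # value measured after the JM3 swap

ratio = measured_after / limit_before
print(f"after / before-limit = {ratio:.2f}")
```

Because the earlier number is only an upper limit, this ratio is a lower bound on how much the second-order peak fraction increased.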

See photo of TM20 mode here.

The 10 and 01 modes are much higher than they were previously, so we will need to do some alignment of the fixed JM2 or JAC_M3 mirrors.

Note that for the MM measurement we were accidentally scanning both the MC2 length and the PSL laser frequency, which might make the measurement confusing.

I closed the light pipe and turned up the purge air before going home.

Let me point out that the term “mode matching” used in Jennie’s post is not exactly accurate in this case; it would be more precise to refer to it as the TEM20/02 mode peak fraction. Since there is a large misalignment, the second-order modes are also enhanced. Therefore, that contribution should be subtracted before attributing the remaining fraction to mode mismatch.
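A rough sketch of the subtraction described above. It assumes a pure small misalignment of an otherwise matched Gaussian beam, for which the power in the n-th order mode along one axis follows a Poisson distribution, so the second-order power relative to the 00 peak is half the square of the first-order ratio. It also ignores interference between the misalignment-induced and mismatch-induced second-order amplitudes, so it is an estimate only. The function names and the first-order peak ratios are hypothetical; the entry does not quote the 10 and 01 peak heights.

```python
def second_order_from_misalignment(r10, r01):
    """Second-order (20 + 02) power relative to the 00 peak expected from
    misalignment alone, given first-order ratios r10 = P10/P00, r01 = P01/P00.
    """
    return 0.5 * (r10 ** 2 + r01 ** 2)


def residual_second_order(r20_measured, r10, r01):
    """Measured 20/02 ratio minus the misalignment estimate, floored at 0."""
    return max(r20_measured - second_order_from_misalignment(r10, r01), 0.0)


r20_measured = 0.43e-2   # value quoted in the post (treated as a ratio to 00)
r10, r01 = 0.04, 0.02    # HYPOTHETICAL first-order peak ratios

misalignment_part = second_order_from_misalignment(r10, r01)
residual = residual_second_order(r20_measured, r10, r01)
print(f"misalignment part: {misalignment_part:.4f}, residual: {residual:.4f}")
```

With the real 10 and 01 peak heights from the scan in place of the hypothetical values, the residual would be the part that can more reasonably be attributed to mode mismatch.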